On Tuesday, January 27, 2015, CTOvision publisher Bob Gourley hosted an event for federal big data professionals. The breakfast event focused on security for big data designs and featured the highly regarded security architect Eddie Garcia.

Eddie Garcia is chief security architect at Cloudera, a leader in enterprise analytic data management. Eddie helps Cloudera enterprise customers reduce security and compliance risks associated with sensitive data sets stored and accessed in Apache Hadoop environments. Working for the office of the CTO, Eddie also provides security thought leadership and vision to the Cloudera product roadmap.

As the VP of InfoSec and Engineering for Gazzang prior to its acquisition by Cloudera, Eddie architected and implemented secure and compliant big data infrastructures for customers in the financial services, healthcare and public sector industries to meet PCI, HIPAA, FERPA, FISMA and EU data security requirements. He was also the chief architect of the Gazzang zNcrypt product and is the author of two data security patents.

After a round of introductions from the audience, Garcia began his presentation. He addressed the shift from “bringing data to compute, to bringing compute to data.” This shift fits well with Cloudera’s enterprise data hub, which is built on Hadoop. Hadoop scales to petabytes of storage and can run multiple workloads on the same cluster. Combined with Cloudera’s technology, Garcia argued, it becomes a secure and powerful enterprise architecture.

Cloudera’s security model is based on four pillars: Perimeter, Access, Visibility and Data. Cloudera positions this model as making its distribution the most secure Hadoop distribution on the market.

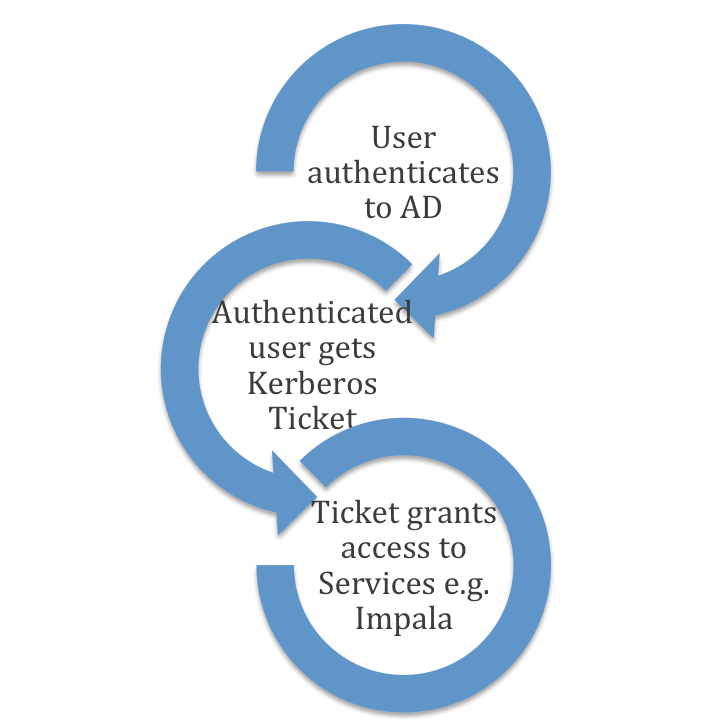

Perimeter security is addressed through authentication. Active Directory and Kerberos are the enterprise authentication staples, ensuring that every user is authenticated. Each user is given a username and password, and group membership controls which services a user can access.

Access security requirements define what individual users and applications can do with the data they receive. In practice, this means giving users access to the data they need to do their jobs, managing access policies centrally, and leveraging a role-based access control (RBAC) model built on Active Directory.

Cloudera delivers unified authorization with Apache Sentry. At the time of the talk, Sentry provided fine-grained RBAC for Impala, Hive, HDFS and Search. The goal is to extend unified authorization to all Hadoop services and third-party applications (such as Spark, Pig, MapReduce and BI tools).

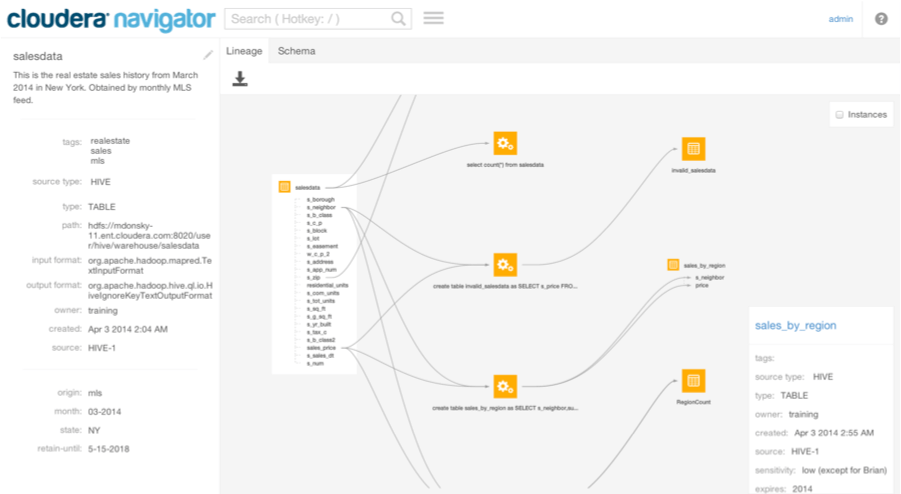
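To make the group-to-role idea concrete, here is a minimal Python sketch of role-based authorization in the general style Sentry follows, where privileges are granted to roles and roles are granted to directory groups. The class and method names (`Privilege`, `Authorizer`, `grant_role_to_group`, `is_allowed`) are hypothetical and are not Sentry’s API; the sketch also assumes each user’s group memberships have already been resolved from Active Directory.

```python
from dataclasses import dataclass, field


# Hypothetical privilege: an action on a named object such as a table.
@dataclass(frozen=True)
class Privilege:
    action: str  # e.g. "SELECT" or "INSERT"
    obj: str     # e.g. "db.sales"


@dataclass
class Authorizer:
    # group -> role names (groups are assumed to come from the directory)
    group_roles: dict = field(default_factory=dict)
    # role -> Privilege objects granted to that role
    role_privs: dict = field(default_factory=dict)

    def grant_role_to_group(self, role, group):
        self.group_roles.setdefault(group, set()).add(role)

    def grant_privilege_to_role(self, priv, role):
        self.role_privs.setdefault(role, set()).add(priv)

    def is_allowed(self, user_groups, action, obj):
        # Allow only if some role held by one of the user's groups carries
        # exactly the requested privilege; deny by default otherwise.
        wanted = Privilege(action, obj)
        for group in user_groups:
            for role in self.group_roles.get(group, ()):
                if wanted in self.role_privs.get(role, ()):
                    return True
        return False


authz = Authorizer()
authz.grant_privilege_to_role(Privilege("SELECT", "db.sales"), "analyst")
authz.grant_role_to_group("analyst", "finance")
print(authz.is_allowed({"finance"}, "SELECT", "db.sales"))    # True
print(authz.is_allowed({"finance"}, "INSERT", "db.sales"))    # False
print(authz.is_allowed({"marketing"}, "SELECT", "db.sales"))  # False
```

Granting privileges to roles rather than to individual users is what keeps policies centrally manageable: when someone joins the finance group in the directory, they pick up the analyst privileges without any change to the authorization rules.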

Visibility requirements concern understanding where data comes from and enabling the discovery of similar data. Meeting them means enforcing policies for auditing, data classification and lineage, while centralizing the audit repository, supporting discovery and automating lineage tracking. Cloudera Navigator provides this visibility and covers all of these requirements (see image below).

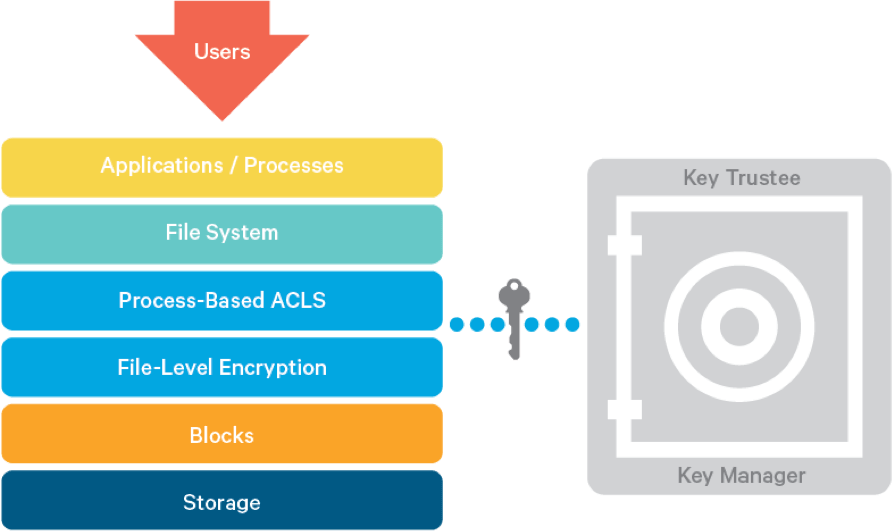
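The visibility requirements can be illustrated with a small, hypothetical sketch: a central store appends every access to a single audit log and records lineage automatically whenever a job writes an output, so the upstream sources of any dataset can be found by walking the lineage graph. The names (`AuditAndLineage`, `record`, `upstream`, `accesses`) are illustrative assumptions and do not reflect how Cloudera Navigator is implemented.

```python
from collections import defaultdict, deque
from datetime import datetime, timezone


class AuditAndLineage:
    """Hypothetical central store for audit events and dataset lineage."""

    def __init__(self):
        self.events = []                 # centralized audit repository
        self.parents = defaultdict(set)  # dataset -> datasets it was derived from

    def record(self, user, operation, inputs, output=None):
        # Every access is appended to the one central audit log.
        self.events.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "operation": operation,
            "inputs": list(inputs),
            "output": output,
        })
        # Lineage is captured as a side effect whenever a job writes output.
        if output is not None:
            self.parents[output].update(inputs)

    def upstream(self, dataset):
        # Breadth-first walk back through lineage to collect every source.
        seen, queue = set(), deque([dataset])
        while queue:
            for parent in self.parents.get(queue.popleft(), ()):
                if parent not in seen:
                    seen.add(parent)
                    queue.append(parent)
        return seen

    def accesses(self, dataset):
        # Audit query: who touched this dataset, and with what operation?
        return [(e["user"], e["operation"]) for e in self.events
                if dataset in e["inputs"] or e["output"] == dataset]


store = AuditAndLineage()
store.record("etl", "ingest", ["raw/logs"], output="staging/sessions")
store.record("alice", "join", ["staging/sessions", "raw/crm"], output="reports/churn")
store.record("bob", "query", ["reports/churn"])
print(sorted(store.upstream("reports/churn")))
# ['raw/crm', 'raw/logs', 'staging/sessions']
print(store.accesses("reports/churn"))
# [('alice', 'join'), ('bob', 'query')]
```

Because lineage is recorded as a side effect of the same call that writes the audit entry, no one has to maintain it by hand, which is the point of automating lineage rather than documenting it separately.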

Data security requirements protect data in the cluster from unauthorized visibility and help meet compliance requirements for PCI, HIPAA, FISMA and FERPA. Data security also segregates data from privileged user accounts, including system administrators, and protects storage media from theft or improper disposal. This pillar includes data encryption, conformance with key management policies, and integration with existing Hardware Security Modules (HSMs) as part of the key management infrastructure. Cloudera addresses these requirements with Navigator Encrypt.

Cloudera Navigator Encrypt provides transparent encryption for a Hadoop cluster. Because it runs beneath the applications, they need no changes: as data moves between the applications and the data nodes, sensitive data is automatically encrypted as it is written to disk and decrypted as it is read back. Navigator Encrypt works in conjunction with Cloudera Navigator Key Trustee, a centralized key management and policy enforcement system that acts as a “virtual safe-deposit box” with built-in auditing.
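The following sketch, assuming a simplified single-process model, shows the layering idea behind transparent encryption: callers write and read plaintext while only ciphertext is stored, and the key comes from a separate key manager that enforces a policy and audits every request. Everything here is hypothetical (`KeyStore`, `EncryptedDisk`), and the HMAC-based keystream is a toy chosen to stay within the Python standard library. It is not how Navigator Encrypt works and must not be used to protect real data; real systems use a vetted cipher such as AES.

```python
import hashlib
import hmac
import secrets


class KeyStore:
    """Hypothetical stand-in for a central key manager with built-in auditing."""

    def __init__(self):
        self._keys = {}
        self._allowed = {}
        self.audit_log = []  # every key request is recorded, granted or not

    def create_key(self, key_id, allowed_principals):
        self._keys[key_id] = secrets.token_bytes(32)
        self._allowed[key_id] = set(allowed_principals)

    def get_key(self, key_id, principal):
        ok = principal in self._allowed.get(key_id, set())
        self.audit_log.append((principal, key_id, "granted" if ok else "denied"))
        if not ok:
            raise PermissionError(f"{principal} may not use key {key_id}")
        return self._keys[key_id]


def _keystream(key, nonce, length):
    # TOY keystream built from HMAC-SHA256(key, nonce || counter) blocks.
    # Illustration only: not an authenticated, vetted cipher.
    out = bytearray()
    counter = 0
    while len(out) < length:
        out += hmac.new(key, nonce + counter.to_bytes(8, "big"), hashlib.sha256).digest()
        counter += 1
    return bytes(out[:length])


class EncryptedDisk:
    """Encrypts on write and decrypts on read, so callers only see plaintext."""

    def __init__(self, keystore, key_id, principal):
        self._key = keystore.get_key(key_id, principal)  # policy check + audit
        self.blocks = {}  # what actually lands "on disk": nonce + ciphertext

    def write(self, path, data):
        nonce = secrets.token_bytes(16)  # fresh nonce for every write
        ks = _keystream(self._key, nonce, len(data))
        self.blocks[path] = nonce + bytes(a ^ b for a, b in zip(data, ks))

    def read(self, path):
        stored = self.blocks[path]
        nonce, ciphertext = stored[:16], stored[16:]
        ks = _keystream(self._key, nonce, len(ciphertext))
        return bytes(a ^ b for a, b in zip(ciphertext, ks))


kms = KeyStore()
kms.create_key("cluster-key", allowed_principals={"datanode"})
disk = EncryptedDisk(kms, "cluster-key", principal="datanode")
disk.write("/data/part-0000", b"ssn=123-45-6789")
print(disk.read("/data/part-0000"))  # b'ssn=123-45-6789'

try:
    EncryptedDisk(kms, "cluster-key", principal="admin")  # not in the key policy
except PermissionError:
    pass
print(kms.audit_log)
# [('datanode', 'cluster-key', 'granted'), ('admin', 'cluster-key', 'denied')]
```

The denied request for the `admin` principal illustrates the segregation goal described above: an account can have full access to the storage yet still be unable to read the data, because the key lives elsewhere and every attempt to obtain it is logged.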

Cloudera offers several mechanisms for keeping data secure. Its security story began well before 2013, but it was accelerated that year when Intel established Project Rhino. The goal of Project Rhino has been a framework for unified authorization, encryption and key management.

In his closing remarks, Garcia debuted Intel’s hardware acceleration figures, a topic he also addressed at the February Strata + Hadoop World in San Jose (see his keynote, “Data: Open for Good and Secure by Default”). He concluded with several security tips:

- Sensitive data doesn’t live only in HDFS (Hadoop Distributed File System); remember other locations, such as logs, metadata stores, queries and temporary map results.

- Tune for performance, because security adds overhead. Follow an established methodology: benchmark, tune, secure, and then retest performance to measure the effect.

- Lock down from the get-go. Plan security from the start; don’t wait until you reach a production environment only to find you have to re-architect to meet security requirements.

- Know the requirements of your security department. They may require an HSM (Hardware Security Module), for example, or compliance with FIPS 140-2 Level 3, which requires a tamper-evident hardware root of trust.
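The benchmark, tune, secure and retest loop from the performance tip can be sketched as follows: time a workload before and after a security layer is added, then express the difference as a percentage overhead. The workloads are hypothetical stand-ins (hashing each record approximates the extra per-record work a security feature adds), and the tuning step itself is left to the operator.

```python
import hashlib
import statistics
import time


def benchmark(fn, repeats=5):
    """Return the median wall-clock time (seconds) of fn() over several runs."""
    timings = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn()
        timings.append(time.perf_counter() - start)
    return statistics.median(timings)


def overhead_pct(baseline_s, secured_s):
    """Relative cost of the security layer as a percentage of the baseline."""
    if baseline_s <= 0:
        raise ValueError("baseline time must be positive")
    return (secured_s - baseline_s) / baseline_s * 100.0


# Hypothetical workload: copy 2,000 records of 4 KiB each.
RECORDS = [bytes(4096) for _ in range(2000)]


def plain_job():
    return [bytearray(r) for r in RECORDS]


def secured_job():
    # Stand-in for security overhead: hash every record on the way through.
    return [(bytearray(r), hashlib.sha256(r).digest()) for r in RECORDS]


if __name__ == "__main__":
    base = benchmark(plain_job)       # benchmark before securing
    secured = benchmark(secured_job)  # retest after securing
    print(f"baseline {base * 1000:.1f} ms, secured {secured * 1000:.1f} ms, "
          f"overhead {overhead_pct(base, secured):.0f}%")
```

Using the median of several runs, rather than a single timing, keeps one noisy run from skewing the before-and-after comparison.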
