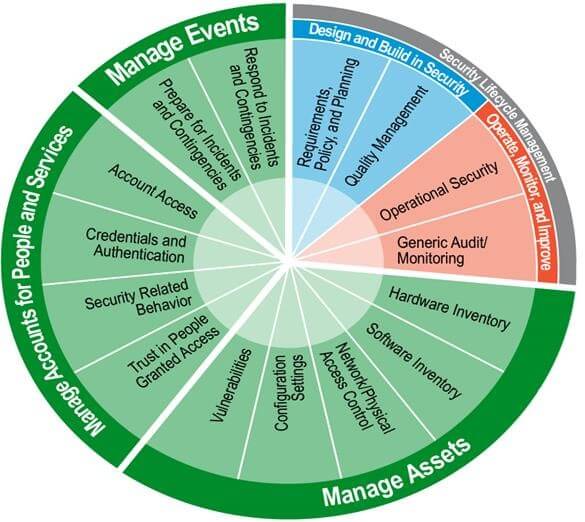

I previously wrote about the various “functional areas” of continuous monitoring. According to the federal model, there are 15 functional areas comprising a comprehensive continuous monitoring solution, as shown in the accompanying graphic.

These functional areas are grouped into the following categories:

- Manage Assets

- Manage Accounts

- Manage Events

- Security Lifecycle Management

Each category addresses a general area of vulnerability in an enterprise. The rest of this post will describe each category, the complexities involved in integration, and the difficulties in making sense of the information. “Making sense” means a number of things here: understanding and remediating vulnerabilities, detecting and preventing threats, estimating risk to the business or mission, ensuring continuity of operations and disaster recovery, and enforcing compliance with policies and standards.

The process of Managing Assets includes tracking all hardware and software products (PCs, servers, network devices, storage, applications, mobile devices, etc.) in your enterprise, and checking them for secure configuration and for known vulnerabilities. While some enterprises institute standard infrastructure components, it still takes a variety of products, sensors and data to obtain a near-real-time understanding of an organization’s security posture. To implement an initial capability in my cybersecurity lab, we have integrated fourteen products and spent considerable time massaging data so that it conforms to NIST and DoD standards. This enables pertinent information to pass seamlessly across the infrastructure, and allows correlation and standardized reporting from a single dashboard. The work will become more complex as we add more capabilities and more products. In addition, the new style of IT extends enterprise assets to include mobile devices and cloud resources, so our work to understand and manage security within this area is just beginning.
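To make the “massaging data” step concrete, here is a minimal sketch of mapping records from two tools into one common asset record and merging them so they can be correlated. The field names, the two source formats, and the `AssetRecord` schema are all hypothetical, invented for illustration; they are not the NIST or DoD formats used in the lab, and a real integration would involve far more fields and sources.

```python
from dataclasses import dataclass, field


@dataclass
class AssetRecord:
    """Common record every source is mapped into (hypothetical schema)."""
    asset_id: str
    hostname: str = ""
    software: set = field(default_factory=set)
    vulnerabilities: set = field(default_factory=set)


def normalize_id(raw: str) -> str:
    """Normalize a MAC-style identifier so that all sources agree on the key."""
    return raw.strip().lower().replace("-", ":")


def from_inventory(row: dict) -> AssetRecord:
    # Hypothetical inventory-tool export: {"mac": ..., "host": ..., "apps": [...]}
    return AssetRecord(
        asset_id=normalize_id(row["mac"]),
        hostname=row["host"].lower(),
        software=set(row.get("apps", [])),
    )


def from_scanner(row: dict) -> AssetRecord:
    # Hypothetical vulnerability-scanner export: {"MAC": ..., "findings": [...]}
    return AssetRecord(
        asset_id=normalize_id(row["MAC"]),
        vulnerabilities=set(row.get("findings", [])),
    )


def merge(records):
    """Combine records that describe the same asset into one view per asset."""
    merged = {}
    for rec in records:
        current = merged.setdefault(rec.asset_id, AssetRecord(rec.asset_id))
        current.hostname = current.hostname or rec.hostname
        current.software |= rec.software
        current.vulnerabilities |= rec.vulnerabilities
    return merged


# Made-up sample data: the same machine as reported by two different tools.
inventory = [{"mac": "00-1A-2B-3C-4D-5E", "host": "WEB01", "apps": ["httpd"]}]
scanner = [{"MAC": "00:1a:2b:3c:4d:5e", "findings": ["EXAMPLE-FINDING-1"]}]

assets = merge([from_inventory(r) for r in inventory] +
               [from_scanner(r) for r in scanner])
```

Each new product then needs only its own small `from_...` adapter, while the merge and reporting logic stays the same. That is one way to keep the integration effort manageable as the product count grows.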

The next area deals with Managing Accounts of both people and services. Typically, we think of account management as watching for too many unsuccessful login attempts or making sure that we delete an account when someone leaves the organization. However, if you look at the subsections of the graphic, you’ll see that managing accounts involves additional characteristics: evaluating trust, managing credentials, and analyzing behavioral patterns. This applies not only to people but also to services, whether system-to-system, server-to-server, domain-to-domain, process-to-process, or any combination thereof. Think about the implications of recording and analyzing behavior, and you’ll realize that any solution will require big data. Security-related behavior is a somewhat nebulous concept, but if you drill down into the details, you can envision capturing information on location, performance characteristics, schedule, keywords, encryption algorithms, access patterns, unstructured text, and more. Accounts (whether a person, system, computer, or service) use assets and groups of assets. As such, the information gleaned from the relationships and interactions between accounts and assets provides another layer of intelligence to the continuous monitoring framework.
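The two “typical” account checks mentioned above can be sketched in a few lines. This is only an illustration under stated assumptions: the event tuple layout, the threshold of five failures in ten minutes, and the account and staff names are all hypothetical, and real behavioral analysis would go well beyond simple rules like these.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def excessive_failures(events, threshold=5, window=timedelta(minutes=10)):
    """Return accounts with at least `threshold` failed logins inside any `window`.

    `events` is an iterable of (account, timestamp, success) tuples
    (a hypothetical layout chosen for this sketch).
    """
    failures = defaultdict(list)
    for account, timestamp, success in events:
        if not success:
            failures[account].append(timestamp)

    flagged = set()
    for account, times in failures.items():
        times.sort()
        # Compare each failure with the one `threshold - 1` positions later.
        for i in range(len(times) - threshold + 1):
            if times[i + threshold - 1] - times[i] <= window:
                flagged.add(account)
                break
    return flagged


def orphaned_accounts(active_accounts, current_staff):
    """Accounts still enabled whose owner is no longer on the staff roster."""
    return {acct for acct, owner in active_accounts.items()
            if owner not in current_staff}


# Made-up sample data: a service account failing repeatedly, a person failing once.
start = datetime(2024, 1, 1, 9, 0)
events = [("svc-backup", start + timedelta(minutes=i), False) for i in range(5)]
events.append(("alice", start, False))
events.append(("alice", start + timedelta(minutes=1), True))

flagged = excessive_failures(events)
orphans = orphaned_accounts({"alice": "Alice", "bob": "Bob"}, {"Alice"})
```

Note that the same check applies to a service account (`svc-backup`) as to a person, which reflects the point that account management covers services as well as people.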

The Managing Events category is organized into preparation for and response to incidents. In the cybersecurity realm, incidents can cover anything from a spear phishing attack to denial of service to digital annihilation to critical infrastructure disruption to destruction of physical plant. Protecting an organization’s assets against that range of incidents calls for an equally wide range of methods, and any physical asset that is somehow connected to a network needs cyber protection. The first thing to do to manage events is to plan! Backup and recovery, continuity of operations, business continuity, disaster recovery: call it what you will, but a plan will help you to understand what needs protecting, how to protect it, and how to recover when those protections fail. This is the next layer of functionality, and complexity, in the continuous monitoring framework; the functions all build upon one another to provide a more secure and resilient enterprise. The security-related data and information aggregated across these layers provide the raw materials to understand your security posture and manage your risk.

The final set of functions deals with Security Lifecycle Management. The lifecycle helps to identify the security requirements of an organization, the associated plans and policies needed to satisfy those requirements, and the processes used to monitor and improve security operations. That improvement is based on the data collected and correlated across all the other functional areas described above. Depending on the size of an organization (dozens of assets to millions of assets) and the granularity of the data (firewall alerts to packet capture), the continuous monitoring framework leads to “big security data”. Timing is also very important. Whereas today we mostly hear about cybersecurity incidents after the fact (hours, days, months, and sometimes years later), continuous monitoring operates on real-time or near-real-time information. The benefits are three-fold: 1) intrusions, threats and vulnerabilities can be detected much more quickly; 2) you can perform continuous authorization on your systems, whereas typically, after the initial Certification & Accreditation approval, re-certification occurs only after a major change in the system or once every two or three years; and 3) big security data can lead to predictive analytics. That’s the holy grail of cybersecurity: the ability to accurately and consistently predict vulnerabilities, threats, and attacks to your enterprise, business, or mission.

There are other benefits to this approach besides improving an organization’s security posture. Let’s face it: all the things I’ve described look like they incur additional costs. Yet after some initial investment, which depends on the size of your organization and the security products you already have in your infrastructure, there are actually cost savings. At the top of the list, continuous monitoring automates many of the manual processes you have today, reduces disruptions in your enterprise, and minimizes periodic accreditation costs. This is, however, a complex undertaking. We’ve learned a lot about what works and what doesn’t as we continue to integrate products and build continuous monitoring capabilities in our lab. Feel free to contact me for best practices or if you have any other questions.

This post first appeared at George Romas’ HP blog.
